Prompt engineering is a relatively new discipline and is an integral facet of generative artificial intelligence (AI), which is revolutionizing our interaction with technology. This innovative discipline is centred on the meticulous design, refinement, and optimization of prompts and underlying data structures. By steering AI systems towards specific outputs, Prompt Engineering is key to seamless human-AI interaction.

As AI integrates deeper into our daily lives, the importance of Prompt Engineering in mediating our engagement with technology is undeniable. Our interactions with virtual assistants, chatbots, and voice-activated devices are heavily influenced by AI systems, thanks to advancements in GPT-3 models and subsequent enhancements in GPT-3.5 and GPT-4.

A Simplified Approach to Defining Prompt Engineering

The Prompt is the Sole Input

When interacting with Generative AI Models such as large language models (LLMs), the prompt is the only thing that gets input into the AI system. The LLM takes this text prompt and generates outputs based solely on it.

Unlike humans, LLMs don't have inherent skills, common sense or the ability to fill in gaps in communication. Their world begins and ends with the prompt. The prompt defines the bounds of possibility for the AI system. Understanding the centrality of prompts is key to steering these powerful technologies toward benevolent ends.

Defining Prompt Engineering

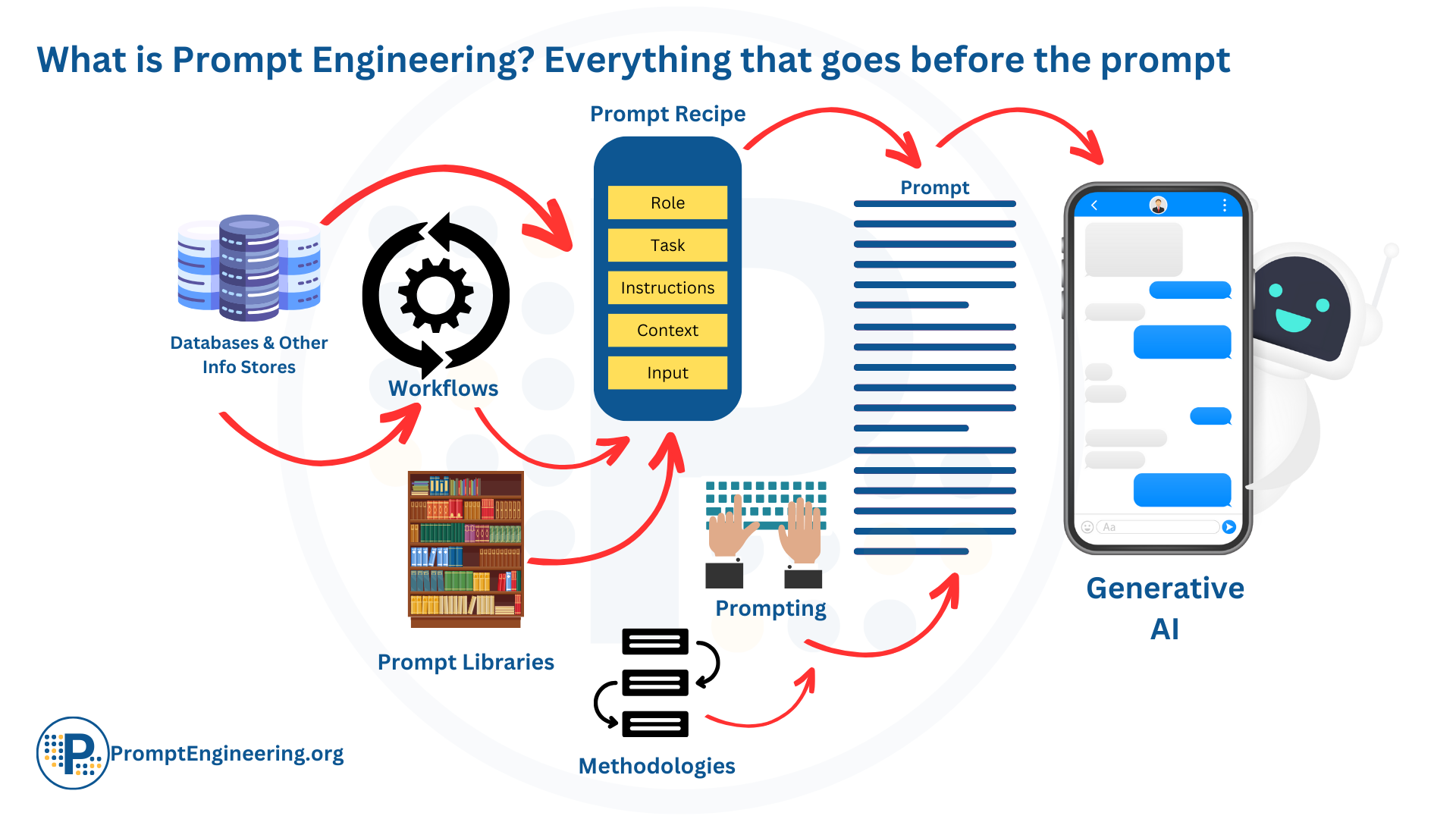

Given that the prompt is the singular input channel to large language models, prompt engineering can be defined as:

Any process that contributes to the development of a well-crafted prompt that generates quality, useful outputs from an AI system.

This expansive definition encompasses the full spectrum of prompting approaches:

- Manual prompt crafting methods like brainstorming and iterating on phrasing

- Leveraging prompt libraries and recipes curated by the community

- Programmatic techniques like retrieval augmented generation and semantic search, embeddings and so on

- Methodological frameworks like chain-of-thought prompting and conversational prompting

- Tools and interfaces that assist in prompt formulation and analysis

- The growing body of methods, techniques and technologies for adding information and data to and crafting prompts

Essentially, anything that helps formulate and refine the textual prompt to unlock an AI's capabilities falls under the umbrella of prompt engineering. It is an indispensable meta-skill for utilizing the power of language models. Just as the prompt is the sole input to the AI, prompt engineering is the sole shaper of that input. Mastering the multifaceted art of prompt engineering is key to steering AI toward benevolent ends.

Unlock the True Potential of AI with Prompt Engineering

Prompt Engineering has emerged as the linchpin in the evolving human-AI relationship, making communication with technology more natural and intuitive. It oversees the intricate interaction cycle between humans and AI, focusing on the methodical design and refinement of prompts to enable precise AI outputs.

The Emergence and Evolution of Prompt Engineering

Prompt Engineering was born from the necessity of better communication with AI systems. The process of prompt optimization, which took form over time, became critical in getting the desired outputs. With the rising demand for sophisticated AI systems, the relevance of Prompt Engineering continues to amplify. This dynamic field is projected to keep evolving as novel techniques and technologies come to the fore.

The Intricacies of Prompt Engineering

Far from merely crafting and implementing prompts, Prompt Engineering is a multifaceted discipline that requires a deep understanding of the principles and methodologies that drive effective prompt design. From creating effective prompts to scrutinizing inputs and database additions, a prompt engineer's role is far-reaching.

Prompt Engineering is an emerging field that still lacks universally accepted definitions or standards. This often causes confusion for newcomers and seasoned professionals alike. However, as advanced AI systems continue to gain traction, Prompt Engineering will only grow in importance. It's a fundamental aspect of AI development, continually adapting to new challenges and technological breakthroughs.

Today, Prompt Engineering stands at the forefront of AI development, adapting as new challenges arise. As this field continues to expand and evolve, the role of prompt engineers in shaping our interactions with technology will become even more significant.

The world of Artificial Intelligence (AI) has welcomed a fresh, ever-evolving field: Prompt Engineering. Because the field is so new, interpretations of this discipline can differ widely.

Traditionally, one might associate Prompt Engineering simply with the creation and implementation of prompts. However, this perception barely scratches the surface of this multifaceted discipline's depth.

In reality, Prompt Engineering transcends the simplistic notion of "writing a blog post on..." This discipline is a sophisticated branch of AI, demanding comprehensive knowledge of principles that underpin effective prompt design.

As an experienced prompt engineer, I've encountered a prevailing misunderstanding that Prompt Engineering revolves merely around sentence construction, devoid of methodological, systematic, or scientific foundations. This article aims to debunk this myth, offering a precise understanding of Prompt Engineering's vast scope.

This domain encompasses numerous activities, ranging from developing effective prompts to meticulously selecting AI inputs and database additions. To ensure the AI delivers desired results, an in-depth grasp of the various factors influencing the efficacy and impact of prompts is essential in Prompt Engineering.

Key Elements of Prompt Engineering

Prompting in Prompt Engineering: The art of developing suitable commands that an AI system can interpret and respond to accurately is pivotal in Prompt Engineering. Designing such prompts, usually articulated in conventional human language, drives pertinent and precise AI responses.

The design considerations generally follow the rules of normal human language, involving:

- Clarity: Unambiguous prompts.

- Context: Adequate context within the prompt for AI guidance.

- Precision: Targeted information or desired output.

- Adaptability: Flexibility with various AI models.

Expanding the AI's Knowledge Base in Prompt Engineering: This process involves teaching the AI to produce necessary outputs using specific data types, enhancing its outputs progressively. In the realm of Prompt Engineering, augmenting an AI's knowledge base can occur at several junctures:

- Knowledge Addition through Prompt in Prompt Engineering: This approach involves the direct input of information via the prompt. Providing examples within the prompt and implementing strategies like few-shot learning (FSL) are integral parts of this method in Prompt Engineering.

- Layered Knowledge Addition in Prompt Engineering: This technique revolves around creating a supplementary layer that sits atop the main database or model. The specifics of this method largely depend on the type of AI model in use, though some common practices include:

  - Creating Checkpoints (saved versions of the model's weights): A particularly beneficial strategy for text-to-image models such as Stable Diffusion within Prompt Engineering.

  - Fine-tuning: This process involves further training the AI system on additional examples so that its parameters shift toward the desired outputs, which is especially relevant for modern language models in Prompt Engineering.

  - Embedding: This practice converts data into numerical vectors that the AI system can compare and retrieve, boosting its ability to produce more precise and relevant outputs, which is an essential part of Prompt Engineering.

By optimizing these processes, Prompt Engineering plays a critical role in refining and expanding the knowledge base of AI systems, paving the way for more effective and accurate artificial intelligence.

Prompt Engineering and Library Maintenance: A well-maintained library of tested and optimized prompts for various AI models boosts efficiency and facilitates knowledge sharing among prompt engineers.

Prompt Optimization, Evaluation, and Categorization in Prompt Engineering: Routine prompt optimization ensures that the prompts remain up-to-date and well-suited for the latest AI models.
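To make the ideas of a prompt library and routine evaluation more concrete, here is a minimal sketch of how prompts could be stored, categorized, and scored. Everything in it is an assumption for illustration: the `PromptEntry` and `PromptLibrary` names, the fields, the `model-a` label, and the scores are hypothetical, and a real library would likely live in a database or a version-controlled repository.

```python
from dataclasses import dataclass, field


@dataclass
class PromptEntry:
    """One tested prompt, with its category and evaluation scores (hypothetical schema)."""
    name: str
    template: str
    category: str
    target_model: str
    scores: list = field(default_factory=list)

    def average_score(self):
        # None signals that the prompt has not been evaluated yet.
        return sum(self.scores) / len(self.scores) if self.scores else None


class PromptLibrary:
    """In-memory collection of prompts, searchable by category."""

    def __init__(self):
        self._entries = {}

    def add(self, entry):
        self._entries[entry.name] = entry

    def record_score(self, name, score):
        self._entries[name].scores.append(score)

    def best_in_category(self, category):
        # Only prompts that have at least one score can be ranked.
        scored = [e for e in self._entries.values()
                  if e.category == category and e.scores]
        return max(scored, key=PromptEntry.average_score, default=None)


library = PromptLibrary()
library.add(PromptEntry("summary_v1", "Summarize: {text}",
                        "summarization", "model-a"))
library.add(PromptEntry("summary_v2",
                        "Summarize the text below in three bullet points.\n\n{text}",
                        "summarization", "model-a"))

# Scores are made-up evaluation results, used only to exercise the code.
library.record_score("summary_v1", 0.6)
library.record_score("summary_v2", 0.8)
library.record_score("summary_v2", 0.9)

best = library.best_in_category("summarization")
print(best.name)
```

Re-running the evaluation whenever a new model version appears, and recording the new scores, is one simple way to keep such a library current.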

Unlocking AI systems' full potential in Prompt Engineering extends beyond mere prompting. It demands continuous exploration of innovative theories and concepts. Cutting-edge techniques such as Chain of Thought Prompting, Self Consistency Prompting, and Tree of Thought Prompting can improve the quality of the reasoning that AI systems produce in response to prompts.

As AI systems become increasingly integrated into our daily lives, the role of Prompt Engineering becomes more vital. Its applications cut across diverse sectors, from healthcare and education to business, securing its place as a cornerstone of our interactions with AI.

Exploration of Essential Prompt Engineering Techniques and Concepts

In the rapidly evolving landscape of Artificial Intelligence (AI), mastering key techniques of Prompt Engineering has become increasingly vital. This segment explores these core methodologies within the scope of language models, specifically examining few-shot and zero-shot prompting, the application of semantic embeddings, and the role of fine-tuning in enhancing model responses. These techniques are pivotal in operating and optimizing the performance of large language models like GPT-3 and GPT-4, propelling advancements in natural language processing tasks.

The Concept of Zero-Shot Prompting

Large language models such as GPT-4 have revolutionized the manner in which natural language processing tasks are addressed. A standout feature of these models is their capacity for zero-shot learning, meaning that the models can comprehend and perform tasks without any explicit examples of the required behavior. For instance, a model can be asked to classify the sentiment of a sentence without being shown a single labeled example.
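As a rough illustration of zero-shot prompting, the sketch below builds a prompt that states the task and supplies the input, but contains no solved examples. The function name `zero_shot_prompt`, the prompt layout, and the sample sentence are assumptions made for this example; the resulting string would be sent to whichever model you use.

```python
def zero_shot_prompt(task: str, text: str) -> str:
    """Build a prompt that describes the task but includes no demonstrations."""
    return f"{task}\n\nText: {text}\nAnswer:"


prompt = zero_shot_prompt(
    "Classify the sentiment of the text as Positive, Negative, or Neutral.",
    "The battery died after two days.",
)
print(prompt)
```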

The Concept of Few-Shot Prompting in Prompt Engineering

Few-shot prompting plays a vital role in improving the performance of large language models on intricate tasks by offering demonstrations. However, it exhibits certain constraints when handling specific logical problems, which points to the need for more sophisticated prompt engineering and alternative techniques like chain-of-thought prompting.

Despite the impressive outcomes of zero-shot capabilities, few-shot prompting has surfaced as a more efficacious strategy to navigate complicated tasks by deploying varying quantities of demonstrations, which may include 1-shot, 3-shot, 5-shot, and more.

Understanding Few-Shot Prompting: Few-shot prompting, also termed few-shot learning, helps large language models (LLMs), including GPT-3, generate the sought-after outputs by supplying them with a small number of input-output pairs. This procedure facilitates in-context learning: the examples condition the model and steer it toward better responses. Although few-shot prompting has demonstrated encouraging outcomes, it does have inherent constraints.
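A minimal sketch of a few-shot prompt builder follows. The `Input:`/`Output:` layout, the function name, and the example sentences are assumptions chosen for illustration; the point is only that the demonstrations are placed in the prompt ahead of the new input.

```python
def few_shot_prompt(instruction, examples, query):
    """Place input-output demonstrations before the new input (3-shot here)."""
    lines = [instruction, ""]
    for text, label in examples:
        lines.append(f"Input: {text}")
        lines.append(f"Output: {label}")
        lines.append("")
    # The final input is left without an output so the model completes it.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


examples = [
    ("I loved this film.", "Positive"),
    ("The plot made no sense.", "Negative"),
    ("It was released in 2019.", "Neutral"),
]
prompt = few_shot_prompt(
    "Classify the sentiment of each input.",
    examples,
    "The soundtrack was wonderful.",
)
print(prompt)
```

Passing one, three, or five pairs in `examples` gives the 1-shot, 3-shot, and 5-shot variants mentioned above.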

Semantic Embeddings/Vector Database in Prompt Engineering

Semantic embeddings are numerical vector representations of text that capture the meaning of words or phrases. Comparing these vectors reveals how similar or different two pieces of text are.

The use of semantic embeddings in search enables the rapid and efficient retrieval of pertinent information, especially in large datasets. Compared with fine-tuning, semantic search is typically faster and cheaper, and because the retrieved text is placed directly in the prompt, it reduces the risk of confabulation, that is, the fabrication of facts. Consequently, when the goal is to give a model access to specific knowledge, semantic search is typically the preferred choice.

These embeddings have found use in a variety of fields, including recommendation engines, search functions, and text categorization. For instance, while creating a movie recommendation engine for a streaming service, embeddings can determine movies with comparable themes or genres based on their textual descriptions. By expressing these descriptions as vectors, the engine can measure the distances between them and suggest movies that are closely located within the vector space, thereby ensuring a more precise and pertinent user experience.
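The movie recommendation idea can be sketched with cosine similarity, a common way to measure how close two vectors are. The titles and the three-dimensional vectors below are invented for illustration; real embeddings come from an embedding model and usually have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings of movie descriptions (hand-written, not from a model).
movie_vectors = {
    "Space Odyssey": [0.9, 0.1, 0.0],
    "Galactic War": [0.8, 0.0, 0.2],
    "Love in Paris": [0.1, 0.9, 0.2],
    "Office Laughs": [0.0, 0.3, 0.9],
}


def recommend(title, k=2):
    """Return the k movies whose vectors are most similar to the given title."""
    query = movie_vectors[title]
    scored = [
        (cosine_similarity(query, vector), other)
        for other, vector in movie_vectors.items()
        if other != title
    ]
    scored.sort(reverse=True)
    return [other for _, other in scored[:k]]


print(recommend("Space Odyssey"))  # ['Galactic War', 'Love in Paris']
```

A vector database performs the same kind of nearest-neighbor lookup, but with indexing that keeps it fast across millions of vectors.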

LLM Fine-Tuning: Augmenting Model Reactions in Prompt Engineering

The process of fine-tuning is used to boost the performance of pre-trained models, such as those that power chatbots. By training further on task-specific examples and adjusting the model's parameters, fine-tuning allows the model to yield more precise and contextually appropriate responses for specific tasks. These tasks can encompass chatbot dialogues, code generation, and question formulation, aligning the model more closely with the intended output. In practice, this is the same mechanism as ordinary training: the neural network's weights are updated, only starting from an already trained model.

For example, in the context of customer service chatbots, fine-tuning can improve the chatbot's comprehension of industry-specific terminologies or slang, resulting in more accurate and relevant responses to customer queries.
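Fine-tuning usually starts with a file of example inputs and desired outputs. The sketch below writes two customer-service examples in JSON Lines form (one JSON object per line). The `prompt` and `completion` field names and the example texts are assumptions for illustration; each fine-tuning service defines its own required format, so check its documentation before preparing real data.

```python
import json

# Hypothetical training examples; the field names are an assumption,
# and the replies are placeholders rather than real support policy.
training_examples = [
    {
        "prompt": "Customer: My SIM shows 'no service' after porting my number.",
        "completion": "Agent: Sorry about that. Please restart the phone, "
                      "then tell me whether the signal returns.",
    },
    {
        "prompt": "Customer: What does 'throttled' mean on my plan?",
        "completion": "Agent: It means your data speed is reduced once you "
                      "reach the plan's data allowance.",
    },
]


def to_jsonl(records):
    """Serialize each record as one JSON object per line."""
    return "\n".join(json.dumps(record, ensure_ascii=False) for record in records)


jsonl_text = to_jsonl(training_examples)
print(jsonl_text)
```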

As a type of transfer learning, fine-tuning modifies a pre-trained model to undertake new tasks without necessitating extensive retraining. The process involves slight changes to the model's parameters, enabling it to perform the target task more effectively.

However, fine-tuning large language models (such as GPT-3) presents its own unique challenges. A prevalent misunderstanding is that fine-tuning will empower the model to acquire new information. In practice, it mainly teaches the model new tasks or patterns, not new knowledge. Moreover, fine-tuning can be time-consuming, intricate, and costly, which limits its scalability and practicality for many use cases.

Chain of Thought Prompting in Prompt Engineering

Chain of Thought Prompting, commonly known as CoT prompting, is a technique that helps language models handle complex, multi-step reasoning tasks that conventional prompting methods do not address well. The key to this approach lies in the decomposition of multi-step problems into individual intermediate steps.

Implementing CoT prompting often involves the inclusion of lines such as "let's work this out in a step-by-step way to make sure we have the right answer" or similar statements in the prompt. This technique encourages a systematic progression through the task, enabling the model to better navigate complex problems. By focusing on a thorough step-by-step approach, CoT prompting tends to produce more accurate and comprehensive outcomes. This methodology provides an additional tool in the prompt engineering toolbox, increasing the capacity of language models to handle a broader range of tasks with greater precision and effectiveness.
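In its simplest form, the step-by-step instruction quoted above is just appended to the question. The helper below is a minimal sketch of that; the function name and the sample question are assumptions for illustration.

```python
COT_SUFFIX = (
    "Let's work this out in a step-by-step way "
    "to make sure we have the right answer."
)


def with_chain_of_thought(question: str) -> str:
    """Append a step-by-step instruction so the model shows intermediate steps."""
    return f"{question}\n\n{COT_SUFFIX}"


prompt = with_chain_of_thought(
    "A shop sells pens in packs of 12. How many packs are needed for 150 pens?"
)
print(prompt)
```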

Knowledge Generation Prompting in Prompt Engineering

Knowledge generation prompting is a novel technique that exploits an AI model's capability to generate knowledge for addressing particular tasks. This methodology guides the model, utilizing demonstrations, towards a specific problem, where the AI can then generate the necessary knowledge to solve the given task.

This technique can be further amplified by integrating external resources such as APIs or databases, thereby augmenting the AI's problem-solving competencies.

Knowledge generation prompting comprises two fundamental stages:

- Knowledge Generation: At this stage, an assessment is conducted of what the large language model (LLM) is already aware of about the topic or subtopic, along with related ones. This process helps to understand and harness the pre-existing knowledge within the model.

- Knowledge Integration at Inference Time: During the prompting phase, which may involve direct input data, APIs, or databases, the LLM's knowledge of the topic or subtopic is supplemented. This process helps to fill gaps and provide a more comprehensive understanding of the topic, aiding in a more accurate response.

In essence, the technique of knowledge generation prompting is designed to create a synergy between what an AI model already knows and new information being provided, thereby optimizing the model's performance and enhancing its problem-solving capabilities.
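The two stages can be sketched as two model calls: one that elicits what the model already knows, and one that answers using that knowledge plus any external facts. In the sketch below, `stub_model` is a stand-in that returns canned text so the code runs offline, and the prompt wording, function names, and sample fact are all assumptions for illustration.

```python
def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call; returns placeholder text."""
    if prompt.startswith("List key facts"):
        return "- Fact placeholder 1\n- Fact placeholder 2"
    return "Answer placeholder based on the supplied knowledge."


def answer_with_generated_knowledge(question, model, external_facts=()):
    # Stage 1 (knowledge generation): ask the model what it already knows.
    knowledge = model(f"List key facts relevant to this question:\n{question}")

    # Stage 2 (knowledge integration at inference time): combine the generated
    # knowledge with facts from external sources such as an API or database.
    extra = "\n".join(f"- {fact}" for fact in external_facts)
    final_prompt = (
        f"Knowledge:\n{knowledge}\n{extra}\n\n"
        f"Question: {question}\nAnswer:"
    )
    return final_prompt, model(final_prompt)


final_prompt, answer = answer_with_generated_knowledge(
    "Why do some metals rust?",
    stub_model,
    external_facts=["Fact retrieved from a hypothetical database."],
)
print(final_prompt)
print(answer)
```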

Delving into Self-Consistency Prompting in Prompt Engineering

Self-consistency prompting is a sophisticated technique that expands upon the concept of Chain of Thought (CoT) prompting. The primary objective of this methodology is to replace the naive greedy decoding normally used with CoT prompting by sampling a range of diverse reasoning paths and selecting the most consistent answer.

This technique can significantly enhance the performance of CoT prompting in tasks that involve arithmetic and common-sense reasoning. By adopting a majority voting mechanism, the AI model can reach more accurate and reliable solutions.

In the process of self-consistency prompting, the language model is provided with multiple question-answer or input-output pairs, with each pair depicting the reasoning process behind the given answers or outputs. The model is then prompted with these examples and tasked with solving the problem by following a similar line of reasoning. Instead of accepting a single response, several responses are sampled and the final answer that appears most often is kept, since agreement across independent reasoning paths is a stronger signal than any one path. This advanced form of prompting illustrates the ongoing development in the field of AI and further augments the problem-solving capabilities of language models.
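The majority-voting step can be sketched in a few lines. The list of sampled answers below is made up; in a real setup each entry would be the final answer extracted from one sampled chain-of-thought response.

```python
from collections import Counter


def majority_answer(sampled_answers):
    """Return the most frequent answer and the share of samples that agree."""
    counts = Counter(sampled_answers)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(sampled_answers)


# Final answers from five hypothetical sampled reasoning paths.
samples = ["18", "18", "26", "18", "20"]
answer, agreement = majority_answer(samples)
print(answer, agreement)  # 18 0.6
```

The agreement ratio can also serve as a rough confidence signal: a low value suggests the reasoning paths disagree and the answer deserves a closer look.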

Self-Reflection Prompting in Prompt Engineering

The self-reflection prompting technique in GPT-4 presents an innovative approach wherein the AI is capable of evaluating its own errors, learning from them, and consequently enhancing its performance. By participating in a self-sustained loop, GPT-4 can formulate improved strategies for problem-solving and achieving superior accuracy. This emergent property of self-reflection has been advanced significantly in GPT-4 in comparison to its predecessors, allowing it to continually improve its performance across a multitude of tasks.

Utilizing 'Reflexion' for iterative refinement of the current implementation facilitates the development of high-confidence solutions for problems where a concrete ground truth is elusive. This approach involves the relaxation of the success criteria to internal test accuracy, thereby empowering the AI agent to solve an array of complex tasks that are currently reliant on human intelligence.

Anticipated future applications of Reflexion could potentially enable AI agents to address a broader spectrum of problems, thus extending the frontiers of artificial intelligence and human problem-solving abilities. This self-reflective methodology exhibits the potential to significantly transform the capabilities of AI models, making them more adaptable, resilient, and effective in dealing with intricate challenges.
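The iterative loop described above (generate, check against internal tests, feed the failures back, try again) can be sketched as follows. This is a simplified illustration and not the Reflexion authors' implementation: `stub_agent` is a stand-in for a code-writing model, and the tests, function names, and round limit are assumptions.

```python
def run_internal_tests(func):
    """Return a list of failure messages; an empty list means all tests passed."""
    failures = []
    for args, expected in [((2, 3), 5), ((-1, 1), 0)]:
        result = func(*args)
        if result != expected:
            failures.append(f"add{args} returned {result}, expected {expected}")
    return failures


def stub_agent(feedback):
    """Stand-in for an LLM that writes code and revises it when given feedback."""
    if not feedback:
        return lambda a, b: a - b  # first attempt is deliberately wrong
    return lambda a, b: a + b      # revised attempt after reading the failures


def reflexion_loop(max_rounds=3):
    feedback = []
    for round_number in range(1, max_rounds + 1):
        candidate = stub_agent(feedback)
        feedback = run_internal_tests(candidate)
        if not feedback:
            # Success is judged by the internal tests, not by a ground truth.
            return candidate, round_number
    return None, max_rounds


solution, rounds = reflexion_loop()
print(rounds)  # 2
```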

Understanding the Role of Priming in Prompt Engineering

Priming is an effective prompting technique where users engage with a large language model (LLM), such as ChatGPT, through a series of iterations before initiating a prompt for the expected output. This interaction could entail a variety of questions, statements, or directives, all aiming to efficiently steer the AI's comprehension and modify its behavior in alignment with the specific context of the conversation.

This procedure ensures a more comprehensive understanding of the context and user expectations by the AI model, leading to superior results. The flexibility offered by priming allows users to make alterations or introduce variations without the need to begin anew.

Priming prepares the AI model for the task at hand, optimizing its responsiveness to specific user requirements. This technique underscores the importance of personalized interactions and highlights the inherent adaptability of AI models in understanding and responding to diverse user needs and contexts. As such, priming represents an important addition to the suite of tools available for leveraging the capabilities of AI models in real-world scenarios.
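In a chat setting, priming amounts to building up a conversation history before the real request is made. The sketch below assembles such a history as a list of role-tagged messages. The `role`/`content` dictionary layout is a common convention for chat models but is an assumption here, as are the function name and the sample turns.

```python
def primed_conversation(priming_turns, final_request):
    """Build a message history: priming exchanges first, then the real request."""
    messages = []
    for user_text, assistant_text in priming_turns:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    messages.append({"role": "user", "content": final_request})
    return messages


priming_turns = [
    ("I run a small bakery and write a weekly newsletter.",
     "Understood. I will keep your bakery and its customers in mind."),
    ("My tone is warm and informal, with short paragraphs.",
     "Noted. I will write in a warm, informal style."),
]
messages = primed_conversation(
    priming_turns,
    "Draft this week's newsletter announcing our new sourdough loaf.",
)
for message in messages:
    print(message["role"], ":", message["content"])
```

Because the history is just a list, a variation can be tried by changing or appending one turn rather than starting the conversation over.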

Debunking Common Misconceptions about Prompt Engineering

Prompt engineering is an emerging field that plays a critical role in the development and optimization of AI systems. Despite its importance, many misconceptions surround this discipline, creating confusion and hindering a clear understanding of what prompt engineering entails. This section addresses some of the most common of these misconceptions and provides clarifications to help dispel them.

1. Misconception: Prompt engineers must be proficient in programming.

Explanation: While having programming skills can be beneficial, prompt engineering primarily focuses on understanding and designing prompts that elicit desired outputs from AI systems. Prompt engineers may work closely with developers, but their primary expertise lies in crafting and optimizing prompts, not programming.

2. Misconception: All prompt engineers do is type words.

Explanation: Prompt engineering goes beyond simply typing words. It involves a deep understanding of AI systems, their limitations, and the desired outcomes. Prompt engineers must consider context, intent, and the specific language model being used to create prompts that generate accurate and relevant results.

3. Misconception: Prompt engineering is an exact science.

Explanation: Prompt engineering is an evolving field, and there is no one-size-fits-all solution. Engineers often need to experiment with different approaches, fine-tune prompts, and stay up-to-date with advancements in AI to ensure optimal results.

4. Misconception: Prompt engineering only applies to text-based AI systems.

Explanation: While prompt engineering is commonly associated with text-based AI systems, it can also be applied to other AI domains, such as text-to-image generation or voice-driven systems, where prompts play a critical role in guiding the AI system towards desired outputs.

5. Misconception: A good prompt will work perfectly across all AI systems.

Explanation: AI systems can have different architectures, training data, and capabilities, which means that a prompt that works well for one system may not be as effective for another. Prompt engineers must consider the specific AI system being used and tailor their prompts accordingly.

6. Misconception: Prompt engineering is not a specialized skill.

Explanation: Prompt engineering is a specialized field that requires a deep understanding of AI systems, language models, and various techniques for optimizing prompts. It is not a simple task that can be easily mastered without dedicated study and practice.

7. Misconception: Prompt engineering only focuses on creating new prompts.

Explanation: Prompt engineering involves not only creating new prompts but also refining existing ones, evaluating their effectiveness, and maintaining a library of optimized prompts. It is a continuous process of improvement and adaptation to changing AI systems and user requirements.

8. Misconception: Prompt engineering is solely about generating creative prompts.

Explanation: While creativity is an essential aspect of prompt engineering, the discipline also requires a strong analytical and problem-solving approach to ensure that the AI system generates accurate, relevant, and contextually appropriate outputs.

9. Misconception: Prompt engineering is only necessary for advanced AI systems.

Explanation: Prompt engineering is crucial for any AI system, regardless of its level of sophistication. Even simple AI systems can benefit from well-designed prompts that guide their responses and improve their overall performance.

10. Misconception: Prompt engineering can be learned overnight.

Explanation: Becoming proficient in prompt engineering requires time, practice, and a deep understanding of AI systems, language models, and the various techniques involved in crafting effective prompts. It is not a skill that can be mastered in a short amount of time.

11. Misconception: Prompt engineers work in isolation.

Explanation: Prompt engineers often collaborate with other professionals, such as developers, UX/UI designers, project managers, and domain experts, to create an AI system that meets specific requirements and delivers a seamless user experience.

12. Misconception: Prompt engineering is only relevant to the AI development stage.

Explanation: Prompt engineering plays a critical role throughout the entire AI system life cycle, from the initial design and development stages to deployment, maintenance, and continuous improvement. Prompt engineers must monitor AI system performance, user feedback, and advancements in AI to ensure the system remains relevant and accurate.

13. Misconception: There is a single "best" approach to prompt engineering.

Explanation: Prompt engineering is a dynamic and evolving field, and there is no universally accepted "best" approach. Prompt engineers must continuously adapt and experiment with different techniques and strategies to achieve optimal results based on the specific AI system and use case.

14. Misconception: Prompt engineering is only applicable to language models.

Explanation: Although prompt engineering is often associated with language models, it can also be applied to other types of AI systems, such as image generation, recommendation engines, and data analysis. The fundamental principles of designing and refining prompts to achieve desired outputs can be adapted to various AI applications.

15. Misconception: There is no need for a dedicated prompt engineer on an AI project.

Explanation: While some smaller projects may not require a dedicated prompt engineer, having a specialist who focuses on prompt engineering can significantly improve the performance, accuracy, and user experience of the AI system. Their expertise can contribute to the success of the project and help avoid common pitfalls in AI development.

16. Misconception: Prompt engineering is not a viable career path.

Explanation: As AI systems become more sophisticated and integrated into various industries, the demand for prompt engineers will continue to grow. The unique skills and expertise of prompt engineers make them valuable assets in AI development teams, and there are opportunities for career growth and specialization in this field.

17. Misconception: Prompt engineering is not a science.

Explanation: Although prompt engineering involves creativity and intuition, it is also a discipline grounded in scientific principles, methodologies, and experimentation. Prompt engineers apply rigorous testing, evaluation, and optimization techniques to refine prompts and improve AI system performance, making it a scientific endeavor.

18. Misconception: The quality of AI system outputs solely depends on the AI model.

Explanation: While the choice of AI model is a critical factor in determining the quality of the outputs, prompt engineering also plays a significant role. Well-designed prompts can help even a less sophisticated AI model produce accurate and relevant outputs, while poorly designed prompts can hinder the performance of a more advanced model. Thus, prompt engineering is a crucial aspect of ensuring AI system effectiveness.

Takeaway

Prompt Engineering is a crucial aspect of human-AI interaction and is rapidly growing as AI becomes more integrated into our daily lives.

The goal of a Prompt Engineer is to ensure that the AI system produces outputs that are relevant, accurate, and in line with the desired outcome. It is more than just telling the AI to "write me an email to get a new job." The key concepts of Prompt Engineering include prompts and prompting the AI, training the AI, developing and maintaining a prompt library, and testing, evaluation, and categorization.

With the demand for advanced AI systems growing, prompt engineering will continue to evolve and become an even more critical field. As the field continues to develop, it is important for prompt engineers to stay updated and share their knowledge and expertise to improve the accuracy and effectiveness of AI systems.