Large language models (LLMs) like GPT-4 have demonstrated remarkable proficiency in generating human-like text. However, as AI systems grow more advanced, their inner workings become increasingly complex and opaque. This has led to concerns about bias, accountability, and the "black box" nature of LLMs.

To address these issues, it can be useful to view LLMs through the lens of a familiar computing construct – the Central Processing Unit (CPU) of a computer. Much like a CPU processes instructions, an LLM processes textual prompts to produce relevant outputs. Exploring this CPU analogy provides a conceptual framework to demystify LLMs and unlock their full potential.

The LLM as a Textual CPU

Everyone knows the Central Processing Unit (CPU). It is the main component of PCs, interpreting and executing software instructions. What if we treated the LLM as some sort of processing unit?

We would find intriguing similarities and differences between these processing units. Drawing a parallel between the CPU and the LLM can shed light on the capabilities and limitations of both, opening doors to innovative synergies and applications.

Functionality & Complexity

- CPU: As the heart of any computer, the CPU processes software instructions and handles arithmetic and logic operations. It's a deterministic machine, executing low-level operations at astonishing speeds, often billions of instructions per second.

- LLM: In stark contrast, the LLM processes textual prompts, churning out human-like textual outputs. It doesn't merely process; it interprets, understands, and generates based on its vast training data. LLMs handle high-level language tasks, making their function more probabilistic than deterministic.

Input, Output, & Versatility

- CPU: Operating in a binary world, the CPU takes machine code as input and gives binary data as output. Its versatility is bound by the software compatible with its architecture.

- LLM: The LLM thrives in the world of language. It takes textual prompts and provides textual responses. Its versatility is immense, capable of generating text across a myriad of topics. However, its accuracy and relevance are tethered to its training data.

Modularity & Integration

- CPU: It doesn't operate in isolation but interacts with various computer components like RAM, GPU, and storage.

- LLM: On the other hand, the LLM primarily communicates with users or systems via text. Yet, its potential isn't confined to text alone; it can be integrated with other systems for diverse applications.

Limitations & Learning

- CPU: Every CPU has its limits, dictated by factors like clock speed, architecture, and thermal constraints. It's a machine that executes without deviation.

- LLM: The LLM's boundaries are set by its training data, model size, and inherent biases. While it doesn't "learn" during runtime, its diverse responses stem from its training, and newer versions can be trained with fresh data for enhancements.

By viewing the LLM as a "textual CPU," we underscore its pivotal role as a linguistic processing engine. Just as the CPU transforms instructions into computational outcomes, the LLM morphs textual prompts into language-based results. But the probabilistic nature of LLMs, dealing with the nuances and ambiguities of language, sets them apart from the deterministic CPUs.

The SLiCK LLM Framework

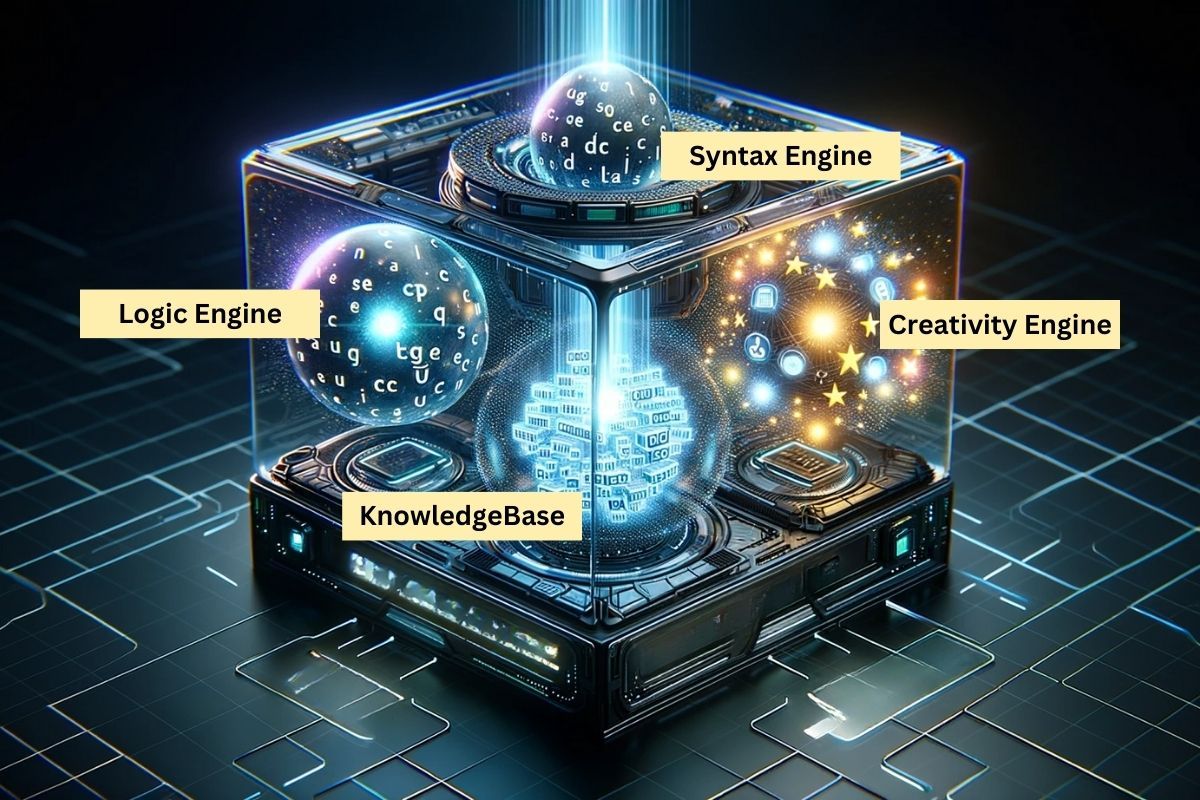

The SLiCK (Syntax Logic Creativity Knowledgebase) framework divides contemporary LLMs into two major operations - the Processing Unit and the Knowledge Base. The Processing Unit is further separated into three engines: Syntax, Creativity and Logic. The Knowledgebase, while not divided (not at this point), contains entities and relationships at its basic level to build upon.

Let's explore the Processing Unit first. The Syntax Engine oversees structural and linguistic tasks like parsing, comprehension, and quality assessment. It ensures coherence and smooth presentation. The Creativity Engine is the imaginative powerhouse, adding nuance, flair and resonance. The Logic Engine aims for accuracy, relevance and reasoning, acting as the strategic brain.

Moving to the Knowledgebase stores the information and facts the LLM uses. It consists of entities or subjects, and relationships describing the connections between entities. The Knowledgebase provides the content, while the Processing Unit determines how to use it. The knowledge base consists of permanent information but can be supplemented with transient information by way of the context window.

The Processing Unit

Much like a Central Processing Unit (CPU) in a computer, which interprets and executes instructions, the "Processing Unit" of an AI model interprets and responds to textual prompts. The CPU's architecture can be broken down into various components, each responsible for specific tasks.

The Processing Unit of an LLM can be broken down into three key components that work together to interpret prompts and generate responses:

1. The Syntax Engine: The Linguistic Architect

This oversees structural and linguistic tasks like parsing, error correction, text manipulation, comprehension, and quality assessment. It ensures outputs are coherent, grammatical, and smoothly presented.

- Text Parsing: The engine breaks down sentences to understand their grammatical components, ensuring a solid foundation for further operations.

- Structural Organization: Information is arranged logically, with ideas transitioning smoothly. This could involve categorizing similar ideas or placing crucial information at the beginning for emphasis.

- Formatting & Refinement: Adherence to stylistic guidelines is ensured, from using markdown for headings to adjusting content for readability.

- Error Correction: The engine identifies and corrects grammatical or syntactical errors, ensuring linguistic soundness.

- Comprehension: The Syntax Engine needs to extract semantics and sentiment accurately. This involves classification, summarization, and translating intent, ensuring the AI understands the underlying meanings and emotions in the text.

- Text Manipulation: The engine has capabilities like grammar error correction and word substitution, which are essential for refining and augmenting text.

- Analysis: The Syntax Engine dives deep into the text, extracting entities, determining sentiment, assessing complexity, and more. Such detailed analysis supports use cases like search engines, recommendation systems, and data extraction tools.

- Generation: The quality of text generation is paramount. The engine ensures that generated content is coherent, relevant, and contextually appropriate. The creative output's quality is also assessed to ensure it meets the standards and expectations set by the prompt.

2. The Creativity Engine: The Imaginative Powerhouse

Here lies the LLM's creative essence. From brainstorming ideas to crafting narratives and adding stylistic touches, the Creativity Engine infuses depth, nuance and flair into the content. It's about resonating with the reader, ensuring the content surprises, engages, and feels insightful.

- Idea Generation: This involves brainstorming and coming up with unique solutions or perspectives on a topic. It's the spark that initiates the creative process.

- Connective Thinking: The Creativity Unit can draw parallels or make connections between seemingly unrelated topics, enriching the content.

- Narrative Crafting: For story-driven prompts, this unit weaves narratives, developing characters, settings, and plots.

- Stylistic Touches: It can adapt to various writing styles, be it poetic, humorous, formal, or casual, giving the content a distinct voice.

- Insight Imitation: One of the key roles of this unit is to create responses that give the illusion of intuition or meaningful observation, making the AI's outputs appear deeply insightful.

- Emotional Resonance: It's vital for the unit to generate text with emotional affect. Whether it's humor, sadness, excitement, or any other emotion, the text should resonate with the reader, creating a subjective impression.

- Novelty: The Creativity Unit ensures the outputs are unique and unpredictable. It might produce content based on uncommon or random associations within its training data, leading to non-sequitur yet interesting results.

- Range and Versatility: Given the vast training corpora, the unit is capable of replicating a diverse range of topics, styles, and genres. This breadth ensures versatility in responses.

- Imagination: While the AI operates within its statistical foundations, the Creativity Unit strives to produce outputs that give an impression of playfulness, whimsy, and unbridled imagination.

- Conceptual Generation: Even though the LLM doesn't truly conceive goals, the Creativity Unit can produce aspirational, goal-driven text. It creates content that seems forward-looking and ambitious, even if it's based on patterns and not genuine conception.

It's not just about producing content but ensuring that the content resonates, surprises, and engages the reader. By focusing on elements like insight imitation, emotional resonance, and conceptual generation, the Creativity Unit pushes the boundaries of what's possible with contemporary LLMs.

3. The Logic Engine: The Deep Thinker

Deciphering intent, understanding context, ensuring relevance, and exhibiting logical reasoning are the Logic Engine's realms. It's the strategic brain, ensuring the LLM's output is accurate, relevant, and logical.

- Intent Interpretation: It's crucial for the Logic Engine to decipher the primary goal of the prompt. This involves understanding the user's objective, whether it's seeking information, advice, or any other intent.

- Contextual Understanding: The engine considers relevant background or contextual information. If a query is a follow-up or requires specific context, the Logic Engine ensures the response is contextually appropriate.

- Constraint Management: It ensures that stipulations in the prompt, such as avoiding certain topics or sticking to a specific format, are adhered to.

- Relevance & Accuracy: The Logic Engine cross-references the generated content against the knowledge base to ensure factual accuracy and contextual relevance.

- Logical Reasoning: A key function is to evaluate and produce responses that showcase coherent, valid, and sound reasoning. It should be able to work within given premises or constraints and maintain a low error rate.

- Language Use: The engine gauges its outputs for linguistic precision, especially in technical writing scenarios. This includes ensuring correct grammar, structure, and an appropriate tone, which is vital for specialized fields like legal tech.

- Sequential Tasks: Proficiency in tasks that require ordered steps is essential. Whether it's performing calculations, sorting datasets, or following a sequence of instructions, the Logic Engine ensures accuracy and completeness.

- Pattern Recognition: This involves identifying patterns within various inputs, be it text, data, images, or other multimedia forms. Recognizing patterns is crucial for tasks like data analysis or trend prediction.

- Common Sense: Even though the LLM operates based on patterns and training data, the Logic Engine attempts to exhibit a semblance of common sense, especially when specific background information might be lacking. It aims to provide outputs that are contextually reasonable and make general sense.

By integrating these elements, the Logic Engine is a sophisticated unit that not only processes information but does so with an emphasis on accuracy, reason, and context. It's the backbone that ensures the AI's outputs are grounded in logic and relevance, tailored to the user's needs.

Knowledgebase: The LLM's Information Repository

Beyond processing lies the LLM's vast knowledgebase, a treasure trove of information and facts. It's from this repository that the LLM fetches information when answering queries. This distinction between the knowledgebase and the processing unit highlights the balance between what the LLM knows and how it employs that knowledge.

Entities

These are the specific subjects, objects, or concepts contained within the knowledge base. They represent individual units of knowledge.

Definition: Entities could be anything from words and phrases to more complex concepts like businesses, technologies, or biological species. entities could range from individual words to phrases or even entire sentences.

Attributes: List the characteristics or features that define each entity. These attributes can be simple descriptors or more complex data points.

Relationships

The connections, interactions, or types of information between entities. It's the structure that links different pieces of knowledge together. Consider:

- Types of Relationships: These could include synonymy (words that mean the same thing), antonymy (words that mean the opposite), hypernymy (one word is a broader category of the other), etc.

- Directionality: Some relationships are one-way, others are mutual. Specify the direction of each relationship.

- Weight: The model's confidence score could serve as a weight, indicating the strength of the relationship between entities. For instance, the relationship between "dog" and "mammal" might have a higher weight than between "dog" and "pet."

Space Between Entities (Semantic Distance)

The vector space where these entities are mapped.

- Metrics: Think of this as counting the number of relationship "hops" between them or as complex as mathematical formulas.

- Dimensions: Consider multiple dimensions that could affect the semantic distance, such as time, context, or cultural factors.

In Action: A query like "Describe the relationship between photosynthesis and oxygen." Here, the knowledgebase identifies "photosynthesis" and "oxygen" as entities. The term "relationship between" specifies the relationship context. The AI then searches its Knowledgebase, retrieves relevant information about the connection between photosynthesis and oxygen, and formulates a coherent response.

It is important to understand that the Knowledgebase is the most malleable part of the LLM, knowledge can be injected quite easily into the context window through the prompt. However, engaging the different engines in the Processing Unit can be a bit more intensive and tricky, especially the logic and the creativity engine. This is where prompt engineering skills come un very handy.

The clear delineation between the knowledgebase and the "Processing Unit" underscores the separation between the information the AI has access to and the mechanisms it uses to process, interpret, and present that information. It's a balance between what the AI knows and how it uses that knowledge.

The Benefits of the SLiCK LLM Framework

This framework of segmenting an LLM's operations into a "Processing Unit" with inner components like the Syntax Engine, Creativity Engine, and Logic Engine, while maintaining an external "Knowledgebase", offers several benefits when approaching contemporary LLMs. Here's a quick rundown on some of the advantages and the problems it aims to solve:

Clearer Understanding of LLM Operations:

Benefit: By breaking down the LLM's operations into distinct components, it becomes easier to understand how the model processes prompts and generates responses.

Problem Solved: It demystifies the "black box" nature of AI, making it more transparent and understandable.

Focused Development and Improvement:

Benefit: With clear delineation of components, developers can target specific areas for improvement. For instance, if an LLM struggles with creativity, the Creativity Engine can be enhanced without affecting other components.

Problem Solved: It allows for more precise tuning and optimization of LLMs.

Enhanced Customizability:

Benefit: Users or developers could potentially adjust the emphasis on different components based on the task. For instance, a task requiring a lot of innovation might prioritize the Creativity Engine.

Problem Solved: It provides adaptability for diverse tasks and applications.

Better Error Diagnosis:

Benefit: If an LLM produces an undesired output, understanding its operations through these components can help pinpoint where the error occurred, be it in comprehension (Syntax Engine), creative interpretation (Creativity Engine), or decision-making (Logic Engine).

Problem Solved: It aids in troubleshooting and refining the model's performance.

Enhanced User Interaction:

Benefit: Users can craft prompts more effectively if they understand the LLM's operational components. Knowing how the model processes information can lead to more desired and accurate outputs.

Problem Solved: It elevates the user's experience and the quality of interactions with the LLM.

Clearer Distinction between Knowledge and Processing:

Benefit: By separating the "Knowledgebase" from the "Processing Unit", it becomes evident that while LLMs have access to vast information, how they use that information depends on their processing mechanisms.

Problem Solved: It addresses concerns about bias and knowledge limitations. The model's knowledge is static (based on the last training data), but its processing can be dynamic and adaptable.

Using and Expanding the SLiCK Framework

Segmenting the LLM's operations offers clarity, focused development, enhanced customizability, and improved user interaction. It demystifies AI, making it transparent and adaptable. This framework not only deepens our understanding of LLMs but also heralds a future of effective development and interaction, addressing challenges related to transparency and optimization. As we continue to explore the realms of AI, such frameworks will be instrumental in shaping our journey.

The SLiCK Box framework has already demonstrated its value in elucidating best practices for prompt engineering. As we apply it across use cases like prompt chaining, generative networks, and everyday LLM usage, we gain deeper insight into optimizing interactions with AI.

But this framework merely marks a starting point. Like the ever-evolving generative AI landscape, our comprehension must also continuously progress. I encourage you to utilize this framework, refine it through experience, and share improvements. Together we can advance a collective understanding of how to obtain the most benefit from AI in a transparent and ethical manner. The SLiCK Box lights the way forward.

Feel free to comment or speak with us directly on how you think we can tweak and improve this framework.