Practical AI Implementation Strategies

With recent advancements in artificial intelligence (AI), specifically large language models (LLMs) like GPT-3, organizations now have access to powerful tools that can transform workflows. However, simply deploying these models is not enough to achieve meaningful results. Strategic implementation is crucial for unlocking the true value of LLMs. In this article, we explore practical strategies for leveraging LLMs to drive organizational success.

Identifying High-Impact Use Cases

Rather than viewing LLMs as a cure-all, organizations should identify specific use cases where these models can offer significant value. Four particularly compelling applications include:

- Documentation Tools: LLMs can automate much of the creation and upkeep of technical documentation. They can parse existing documents, distill key points, and draft summaries, FAQs, and user manuals. This automation saves time and resources while helping keep documentation clear and consistent.

- High-Level Decision-Making: LLMs can support leaders facing complex choices. By summarizing large volumes of reports and data, they help surface trends and patterns that inform the decision.

- Code Review Tools: LLMs can assist code review by flagging likely bugs, redundant code, and style inconsistencies. This frees developers to focus on harder problems and helps improve code quality and efficiency.

- Multimodal Decision-Making: Models that can combine several kinds of input - text, images, audio - give organizations a broader view of complex issues and support better-informed decisions.

Notably, identified use cases should align with overarching organizational goals for maximum strategic impact.
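One way to make this alignment concrete is to score candidate use cases before committing to any of them. The sketch below is only an illustration: the `UseCase` fields, the 1-to-5 scales, the scoring formula, and the example numbers are all hypothetical placeholders, not figures from any real assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    expected_value: int  # hypothetical scale: 1 (low) to 5 (high)
    goal_alignment: int  # how well it supports organizational goals, 1 to 5
    effort: int          # estimated implementation effort, 1 to 5


def priority_score(case: UseCase) -> float:
    """Illustrative formula: value weighted by alignment, divided by effort."""
    return case.expected_value * case.goal_alignment / case.effort


def rank_use_cases(cases):
    """Return use cases ordered from highest to lowest priority score."""
    return sorted(cases, key=priority_score, reverse=True)


# Placeholder numbers for illustration only.
candidates = [
    UseCase("code review tools", expected_value=4, goal_alignment=4, effort=3),
    UseCase("multimodal decision-making", expected_value=5, goal_alignment=3, effort=5),
    UseCase("documentation tools", expected_value=4, goal_alignment=5, effort=2),
]

ranked = rank_use_cases(candidates)
for case in ranked:
    print(f"{case.name}: {priority_score(case):.2f}")
```

The exact formula matters less than agreeing on the criteria up front, so that use cases are compared on the same terms.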

Capturing the Right Data

Fueling LLMs' success requires investing in robust datasets. This involves aggregating relevant, high-quality data from across the organization and structuring it to power training and testing. Additionally, developing specific benchmark scenarios helps assess performance in priority contexts.

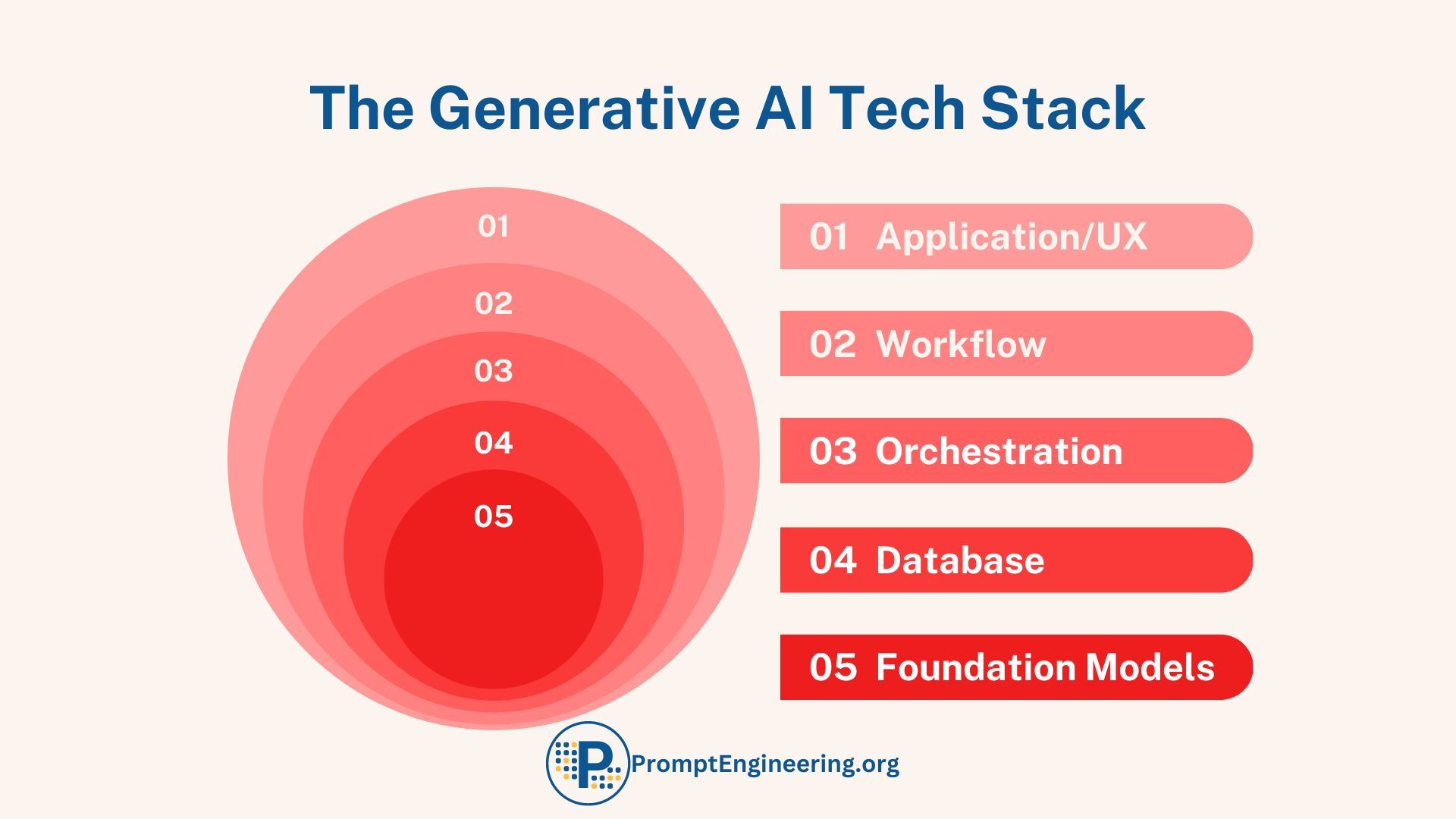
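Benchmark scenarios can start out very simple. The following is a minimal sketch, assuming each scenario is a prompt plus a set of terms a passing answer must mention. The `Scenario` class, the `run_benchmark` function, the example prompts, and the `stub_model` stand-in are all hypothetical; a real setup would call an actual model and would likely use a richer scoring method than keyword matching.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Scenario:
    name: str
    prompt: str
    required_terms: Tuple[str, ...]  # terms a passing answer must mention


def run_benchmark(model: Callable[[str], str], scenarios) -> dict:
    """Run every scenario and report pass/fail per scenario plus a pass rate."""
    results = {}
    for scenario in scenarios:
        answer = model(scenario.prompt).lower()
        results[scenario.name] = all(
            term.lower() in answer for term in scenario.required_terms
        )
    pass_rate = sum(results.values()) / len(results) if results else 0.0
    return {"results": results, "pass_rate": pass_rate}


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption: swap in your own client)."""
    if "refund" in prompt.lower():
        return "Refunds are processed within 14 days of the request."
    return "I am not sure."


# Made-up scenarios for illustration only.
scenarios = [
    Scenario("refund policy", "Summarize our refund policy.", ("refund", "14 days")),
    Scenario("escalation path", "Who handles escalations?", ("support lead",)),
]

report = run_benchmark(stub_model, scenarios)
print(report)
```

Keeping the scenarios in version control alongside the data makes it easier to compare models, or versions of the same model, on identical inputs.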

Adopting a Structured Implementation Framework

A systematic framework guides effective LLM adoption. Key components include data pipelines, model selection protocols, training procedures, deployment rules, and monitoring systems. This coordinated approach enables seamless integration and ongoing optimization.

Additionally, the framework should cover aspects like:

- Defining clear objectives and success metrics to track progress

- Conducting pilot studies focused on high-priority use cases before broad deployment

- Implementing staging environments for rigorously testing models and integrations

- Establishing model versioning procedures as capabilities rapidly advance

- Architecting with extensibility and future growth in mind
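Several of these points (success metrics, pilot studies, and model versioning) can be tied together with a simple promotion gate. The sketch below is one possible shape, not a prescribed design: `SuccessMetric`, `ModelRegistry`, the metric names, and the thresholds are hypothetical and would be replaced by whatever the organization actually tracks.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class SuccessMetric:
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass
class ModelRegistry:
    production_version: Optional[str] = None
    history: List[str] = field(default_factory=list)  # previously promoted versions

    def promote(self, version: str, metrics: List[SuccessMetric],
                pilot_results: Dict[str, float]) -> List[str]:
        """Promote `version` only if every metric is met in the pilot.

        Returns the names of failed (or missing) metrics; an empty list
        means the version was promoted.
        """
        failed = [
            m.name for m in metrics
            if m.name not in pilot_results or not m.is_met(pilot_results[m.name])
        ]
        if not failed:
            if self.production_version is not None:
                self.history.append(self.production_version)
            self.production_version = version
        return failed


# Hypothetical metrics and pilot numbers, for illustration only.
metrics = [
    SuccessMetric("summary_accuracy", 0.90),
    SuccessMetric("p95_latency_seconds", 2.0, higher_is_better=False),
]
registry = ModelRegistry()
failed_v1 = registry.promote(
    "v1", metrics, {"summary_accuracy": 0.93, "p95_latency_seconds": 1.4}
)
failed_v2 = registry.promote(
    "v2", metrics, {"summary_accuracy": 0.95, "p95_latency_seconds": 3.1}
)
print(registry.production_version, failed_v1, failed_v2)
```

In this example the second version is more accurate but misses the latency threshold, so the first version stays in production. Recording earlier versions in `history` also leaves a trail to roll back to.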

Furthermore, embracing agile principles allows the framework to adapt as lessons emerge. Short, iterative cycles make it possible to respond promptly to user feedback and usage data.

Overall, a structured framework balances standardization with flexibility. Thoughtful design choices and agile delivery make it possible to introduce LLMs responsibly while maximizing organizational advantage. The framework acts as the blueprint for strategic integration, guided by metrics that reflect real-world impact.

Avoiding Common Missteps

To truly leverage AI's potential while avoiding pitfalls, organizations should:

- Align AI with existing workflows: AI should enhance, not disrupt, existing processes.

- Focus on specific tasks: LLMs are powerful but not universal solutions. Pinpoint tasks where they can add significant value.

- Understand limitations: Recognize the inherent constraints of LLMs, such as factually incorrect output, and plan reviews and safeguards around them.

- Manage expectations: Embrace incremental improvements rather than anticipating an immediate overhaul.

Takeaway

Strategically implementing LLMs - identifying high-value applications, capturing the right data, adopting structured frameworks, and avoiding common mistakes - unlocks transformative potential. Guided properly, these models can optimize workflows, augment human capacities, and ultimately confer organizational advantages. With responsible development, LLMs promise to serve as indispensable drivers of efficiency, innovation and competitive edge.
