As Generative AI proliferates across industries, the emerging field of prompt engineering is becoming indispensable for successfully applying these technologies.

With generative AI expanding beyond chatbots into complex use cases, dedicated prompt engineering roles are needed to design, operate, and maintain the prompts and generative frameworks that let applications communicate meaningfully with AI systems.

Just as human conversation requires finesse, so too does conversing with AI. To realize the full potential of generative AI, continued advancements in the art and science of prompt engineering will be critical.

A Quick Recap on Prompt Engineering

Understanding the Role of the Prompt

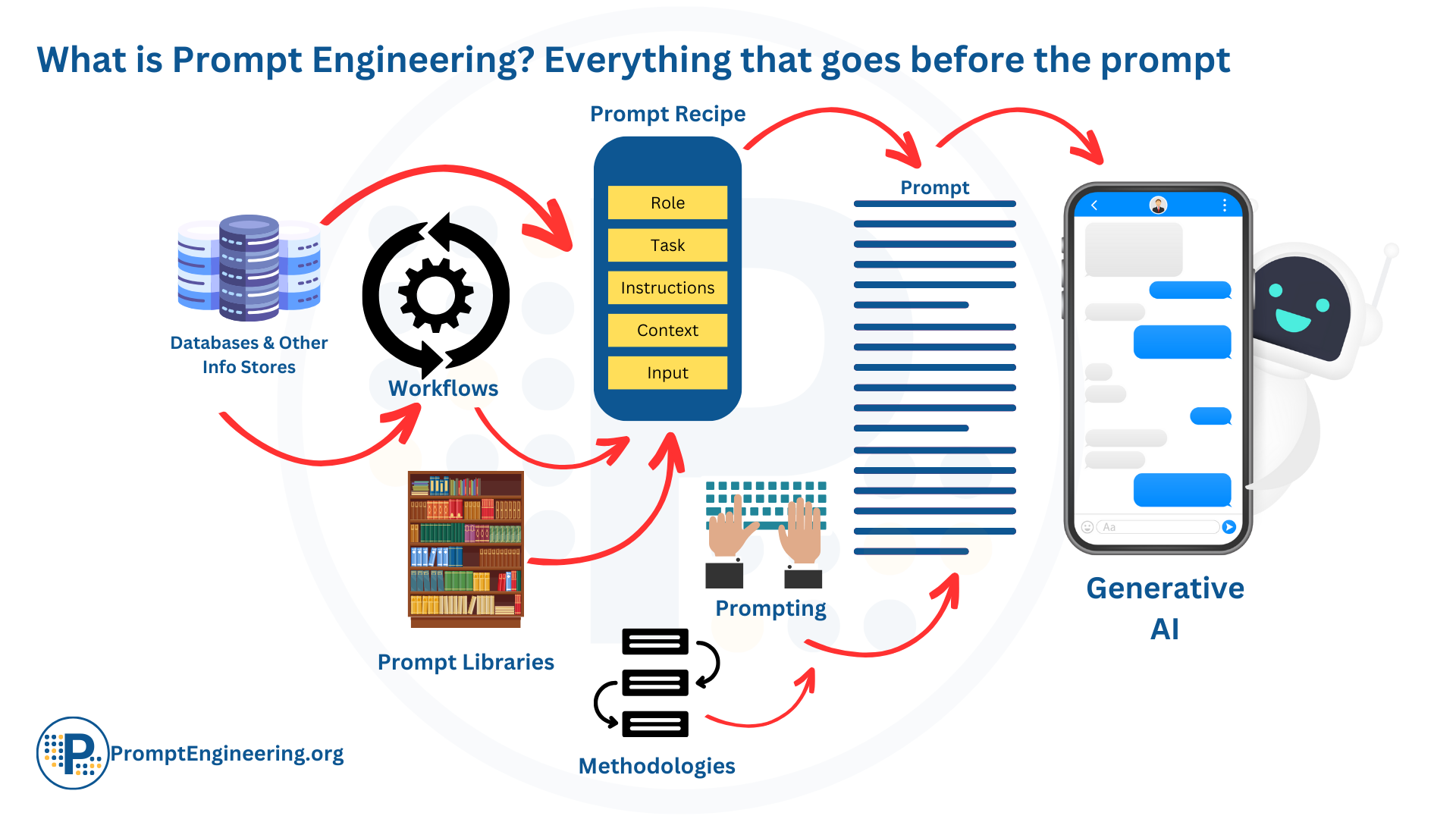

When we converse with advanced AI systems, especially Large Language Models (LLMs), the input prompt plays a critical role. It's the single piece of information that guides the AI's response. Hence, a lot of emphasis is placed on shaping this prompt meticulously.

LLMs, unlike humans, have no inherent way to resolve vague instructions. Everything they produce is conditioned on the prompt and its context. This is why prompt engineering matters when putting LLMs to full use. Well-designed prompts shape how innovative and productive the AI's output can be, while keeping it within safe and desirable parameters.

The Iceberg Metaphor

Visualize the prompt as the visible portion of an iceberg: what we observe is a fraction of a much larger system. Similarly, the prompt is the surface of several underlying factors, such as context, user objectives, and precise wording, which together shape an effective prompt.

The Essence of Prompt Engineering

Prompt engineering stands at the intersection of technology and strategy, aiming to craft the ideal prompts that elicit desired responses from AI systems. This discipline dives deep into the creation of these optimized prompts, which entails:

- Grasping the strengths and weaknesses of the AI model.

- Clarifying the desired output's intention and boundaries.

- Using advanced techniques such as conversational prompts and prompt chaining.

- Leveraging resources like prompt libraries and specialized models.

- Integrating additional context via external retrieval mechanisms.

- Engaging in repeated refinement cycles to hone the prompt.

- Examining AI feedback to enhance future prompts.

- Designing user-friendly tools to streamline prompt formation.

Simply put, prompt engineering centers on the art and science behind the final instruction provided to an AI system. With the rapid advancements in AI, particularly generative AI, this domain is becoming increasingly pivotal. Any innovation that improves the quality of these instructions is part of prompt engineering.
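Prompt chaining, one of the techniques listed above, can be illustrated with a minimal sketch. The `fake_model` function is a hypothetical stand-in for a real LLM call, and the `{previous}` placeholder name is an assumption made for this example rather than an established convention.

```python
from typing import Callable, List


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; it only wraps the prompt it receives."""
    return f"[model output for: {prompt}]"


def run_chain(templates: List[str], user_input: str, model: Callable[[str], str]) -> str:
    """Run prompts in sequence, feeding each step's output into the next template."""
    previous = user_input
    for template in templates:
        # Each template has a {previous} slot for the prior step's result.
        prompt = template.format(previous=previous)
        previous = model(prompt)
    return previous


steps = [
    "Summarize the following text in one sentence: {previous}",
    "Rewrite this summary in plain language for a new hire: {previous}",
]
result = run_chain(steps, "Quarterly revenue grew 12% while costs fell.", fake_model)
print(result)
```

Splitting the work into steps keeps each prompt narrow, which makes it easier to inspect and refine a single step without rewriting the whole interaction.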

Comprehensiveness of Prompt Engineering

It's crucial to understand that prompt engineering isn't restricted to just drafting the text that interacts with the AI. It's a holistic approach that considers every element leading to that interaction. This involves recognizing user requirements, discerning the specific AI application, and being aware of the model's constraints. The process is often multi-stage and iterative, requiring research, evaluation, and continuous improvement.

The Evolution of Prompt Engineering

From Simple Inputs to Advanced Interactions

In its early stages, prompt engineering was rudimentary, typically involving just typing basic words or sentences into a chat interface to invoke AI responses. However, as the nuanced effects of phrasing, context, and instructions on output quality were discovered, the field evolved rapidly.

Emergence of Prompting Techniques and Libraries

Initially, unique methods like few-shot prompting, conversational prompting, chain-of-thought, and role-playing were crafted individually for each AI interaction. As the potential for reusable patterns was realized, the concept of prompt recipes emerged. These templates were developed to standardize effective prompting strategies, eventually leading to the creation of curated prompt libraries for team-wide applications.
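A prompt recipe of the kind described here can be sketched as a small reusable template. The `FewShotRecipe` class, the `PROMPT_LIBRARY` dictionary, and the `Input:`/`Output:` layout are all hypothetical choices for illustration; real prompt libraries vary in structure.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class FewShotRecipe:
    """A reusable few-shot template: one instruction plus worked examples."""
    instruction: str
    examples: List[Tuple[str, str]] = field(default_factory=list)

    def render(self, query: str) -> str:
        """Build the final prompt text for a new query."""
        lines = [self.instruction, ""]
        for example_input, example_output in self.examples:
            lines.append(f"Input: {example_input}")
            lines.append(f"Output: {example_output}")
            lines.append("")
        # The trailing "Output:" leaves the answer for the model to complete.
        lines.append(f"Input: {query}")
        lines.append("Output:")
        return "\n".join(lines)


# A tiny shared library keyed by task name (hypothetical).
PROMPT_LIBRARY: Dict[str, FewShotRecipe] = {
    "sentiment": FewShotRecipe(
        instruction="Classify the sentiment of each input as positive or negative.",
        examples=[
            ("I love this product.", "positive"),
            ("The app keeps crashing.", "negative"),
        ],
    ),
}

prompt = PROMPT_LIBRARY["sentiment"].render("Support resolved my issue quickly.")
print(prompt)
```

Keeping the instruction and examples in one place means a team can improve a recipe once and have every caller pick up the change.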

Fine-tuning LLMs and Addressing Challenges

At the same time, LLMs were fine-tuned for custom applications, refining their response patterns. However, with fixed knowledge cut-offs and no direct connection to real-world data, these models remained prone to hallucinated responses.

Innovative Prompt Engineering Solutions

To counter these issues, the prompt engineering community pioneered methods to infuse dynamic facts and external knowledge into the models. This gave rise to retrieval-augmented generation (RAG), which uses semantic search over extensive knowledge bases to supply relevant facts at prompt time.
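The idea of retrieving facts and placing them in the prompt can be shown with a deliberately simplified sketch. Note the assumption: word overlap is used here as a stand-in for semantic search, which in practice would typically rely on embeddings and a vector index. The knowledge base entries and function names are hypothetical.

```python
import re
from typing import List, Set

# Hypothetical knowledge base; a real system would query an external store.
KNOWLEDGE_BASE = [
    "The refund window for annual plans is 30 days.",
    "Support is available on weekdays from 9:00 to 17:00 UTC.",
    "Passwords must be rotated every 90 days.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Rank documents by word overlap with the question (a stand-in for semantic search)."""
    question_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_grounded_prompt(question: str, documents: List[str], top_k: int = 1) -> str:
    """Place the retrieved passages ahead of the question so the model can rely on them."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, top_k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


grounded = build_grounded_prompt("What is the refund window for annual plans?", KNOWLEDGE_BASE)
print(grounded)
```

The instruction to admit when context is insufficient is one common way to discourage the model from filling gaps with invented details.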

From Optional Skill to Critical Competence

What began as a peripheral "nice-to-have" skill has matured into an essential capability for the safe and effective deployment of potent LLMs. As the AI realm expands and diversifies, the sophistication and importance of prompt engineering are set to rise in tandem, marking its undeniable significance in the AI world.

The Rising Importance of Prompt Engineering

The LLM Revolution in Tech Landscape

The technological landscape is witnessing a paradigm shift as Large Language Models (LLMs) make headway into myriad applications and platforms. With major tech companies launching and commercializing foundation models and generative AI (GenAI), a domino effect is under way: businesses, in a bid to remain cutting-edge, are rapidly integrating these tools to elevate their offerings. A testament to this trend is a recent report by OpenAI, which found that 60% of AI-driven services now use LLMs^5^.

Challenges & Missteps in the LLM Era

However, as with any burgeoning technology, there are teething issues. While the capabilities of LLMs can be breathtaking, there have been instances of spectacular mishaps, such as those witnessed with ChatGPT and Bing in early 2023^2^. These 'fails' underscore the importance of having precise and thoughtful interactions with these models, leading to the emphasis on prompt engineering.

The Competitive LLM Marketplace

The AI market is gearing up for an influx of LLM variations as vendors scramble to capture a slice of this lucrative pie. While all these models will undoubtedly be potent, the differentiating factors will soon boil down to non-functional aspects. Parameters like size, licensing agreements, cost implications, and user-friendliness will take precedence in decision-making^2^.

Prompt Engineering: The Key to LLM Efficacy

In such a saturated market, the onus will be on organizations to not just select the right LLM but to harness it effectively. This is where prompt engineering comes into play. With LLMs being devoid of human intuition and common sense, the quality of output is directly proportional to the quality of the input, or the 'prompt'. Efficient prompt engineering ensures that interactions with these models are strategic, effective, and aligned with the desired outcome.

Advanced Prompt Engineering and Autonomous Systems

Shifting from Human-AI Conversations to Automated Systems

While the early forays into generative AI predominantly emphasized human-AI dialogue and content generation, there's a marked shift towards automation, relegating human involvement primarily to supervisory roles. Advanced workflow systems, epitomized by Generative AI Networks (GAINs) and autonomous agents, now orchestrate AI agents to carry out processes with minimal human oversight. In this landscape, prompt engineering is the cornerstone that brings these automated processes to fruition.

Redefining Prompting for Automation

To effectively harness these autonomous systems, prompt engineering must undergo transformation. Engineers will be tasked with crafting recipes and frameworks tailored for:

- Autonomous Prompting: Crafting prompts autonomously based on structured data and predefined schemas.

- AI-driven Iterative Refinement: Entrusting AI agents, guided by reinforcement learning, with the iterative refinement of prompts.

- Feedback Loops: Integrating generated outputs back into the system as foundational training data.

- Continuous Monitoring and Evaluation: Actively assessing the efficacy of prompts and making data-driven refinements.

- Human-AI Handoffs: Strategically determining the junctures where humans step in to assume control or oversight.
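Two of the items above, autonomous prompting from structured data and human-AI handoffs, can be combined in a small sketch. The schema fields, the `PromptDecision` type, and the escalation rule are hypothetical and chosen only to make the idea concrete.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical schema: every record must carry these fields before a prompt is built.
REQUIRED_FIELDS = ("order_id", "customer_tier", "issue")


@dataclass
class PromptDecision:
    prompt: str        # Generated prompt, empty when a human must take over
    needs_human: bool  # True when the record should be escalated
    reason: str        # Short explanation for logs and monitoring


def build_prompt(record: Dict[str, str]) -> PromptDecision:
    """Generate a prompt from a structured record, or hand off when the schema is not met."""
    missing = [name for name in REQUIRED_FIELDS if not record.get(name)]
    if missing:
        return PromptDecision("", True, f"missing fields: {', '.join(missing)}")
    prompt = (
        f"Draft a reply for order {record['order_id']} "
        f"(customer tier: {record['customer_tier']}). "
        f"Reported issue: {record['issue']}. Keep the reply under 100 words."
    )
    return PromptDecision(prompt, False, "schema satisfied")


complete = {"order_id": "A-1001", "customer_tier": "gold", "issue": "late delivery"}
incomplete = {"order_id": "A-1002", "customer_tier": "", "issue": "damaged item"}

ready = build_prompt(complete)
escalated = build_prompt(incomplete)
print(ready.prompt)
print(escalated.reason)
```

Recording a reason alongside each decision also gives the monitoring step something concrete to count, such as how often records are escalated and why.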

Rethinking Prompt Strategies for Industrial Applications

The strategies that have served us in conversational AI contexts might fall short in automated workflows. The onus is on prompt engineering to recalibrate its techniques, ensuring they're in line with the exigencies of industrial-scale AI applications.

The Future of Prompt Engineering in Automation

The integration of generative AI across industries and societal facets signifies that prompt engineering's role is evolving. It's transitioning from merely facilitating human-AI interplay to being the linchpin in fully automated, AI-driven operations. Mastery in prompt engineering tailored for these automated workflows is not just beneficial; it's imperative for organizations to tap into the boundless potential of AI-led operations.

The Enduring Need for Prompt Optimization

The Art and Science of AI Communication

As AI systems continue to push the boundaries of capability, the refinement of how we interact with them becomes ever more paramount. Analogous to the intricacies of human dialogue, conversing with AI systems is laden with subtleties.

The Power of Prompting

The precision in how we formulate prompts – selecting the right diction, establishing the structure, and offering relevant context – has a profound influence on the richness and applicability of AI responses. The myth that prompts can be universally applied is just that – a myth.

The Need for Customization

AI, with its diverse architectures, myriad applications, and an array of desired outcomes, demands a personalized touch in prompting. Zeroing in on the ideal prompt for any given scenario entails a mix of trial, error, and continuous learning tailored to individual use cases.

The Limitations of AI's Intuition

Despite their burgeoning capabilities, AI models are not clairvoyant. They can't intuit human intention out of thin air. This underscores the significance of meticulously designed prompts as the conduit for harnessing their prowess both securely and productively.

The Timelessness of Effective Communication

Like the art of writing, which has remained invaluable throughout the long history of human interaction, prompt engineering is a continually evolving discipline. It’s the bridge that ensures fruitful human-AI collaborations. As technology and our understanding of AI mature, the quest for refining and optimizing prompts will persist, underlining its timeless relevance in the AI landscape.

Injecting Facts and Constraints via Prompts

Guiding AI with Rich Information

One of the fundamental advantages of prompt engineering lies in its capacity to infuse AI models with pertinent external data. Through skillfully constructed prompts, models can be endowed with essential facts, numerical data, constraints, and contextual insights that can shape their responses in a targeted manner.

Grounding AI in Reality

While AI models, especially the more sophisticated ones, possess vast computational power, they often fall short in real-world awareness and human-like common sense. Through effective prompt engineering, these models can be anchored to tangible reality, thus ensuring outputs that are both accurate and contextually apt.

Prompt Elements: From Facts to Logic

Effective prompts can incorporate a wide array of information:

- Domain-Specific Information: Tailoring responses based on specialized fields, like presenting a medical diagnosis grounded in current clinical knowledge.

- Safety Parameters: Embedding guidelines within prompts ensures AI outputs remain consistent with human values and societal norms.

- Reasoning Frameworks: Providing the AI with foundational logical constructs to guide its deductions and conclusions.

- External References: Connecting the AI to external databases can facilitate real-time access to updated information, enriching its responses.
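The elements above can be assembled into a single prompt with a simple composer. This is a minimal sketch: the section titles, the function names, and the shipping example are hypothetical, and the `references` argument assumes the external lookup has already happened elsewhere.

```python
from typing import Sequence


def render_section(title: str, items: Sequence[str]) -> str:
    """Render one titled bullet section, or an empty string when there are no items."""
    if not items:
        return ""
    bullets = "\n".join(f"- {item}" for item in items)
    return f"{title}:\n{bullets}"


def compose_prompt(
    task: str,
    domain_facts: Sequence[str] = (),
    safety_rules: Sequence[str] = (),
    reasoning_steps: Sequence[str] = (),
    references: Sequence[str] = (),
) -> str:
    """Combine facts, constraints, reasoning guidance, and references ahead of the task."""
    sections = [
        render_section("Domain facts", domain_facts),
        render_section("Safety parameters", safety_rules),
        render_section("Reasoning framework", reasoning_steps),
        render_section("External references", references),
    ]
    # Skip empty sections so the prompt carries no blank headings.
    body = "\n\n".join(part for part in sections if part)
    return f"{body}\n\nTask: {task}" if body else f"Task: {task}"


composed = compose_prompt(
    task="Estimate the delivery date for an order placed on Monday.",
    domain_facts=["Standard shipping takes 3 to 5 business days."],
    safety_rules=["Do not promise a guaranteed date."],
    reasoning_steps=["State your assumptions before giving the estimate."],
)
print(composed)
```

Placing the task last keeps the facts and constraints in view before the model reads what it is being asked to do, though the best ordering may differ between models.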

Harnessing AI Responsibly with Prompts

Injecting such knowledge into AI via well-curated prompts mitigates risks associated with misinformation or off-tangent outputs. Instead of wandering into the realm of baseless speculation or erroneous claims, models can become reservoirs of dependable and insightful information.

Synchronizing with AI Evolution

As AI technology continues its rapid trajectory of advancement, the science of prompt engineering matures in tandem. This simultaneous growth ensures that as AI becomes more potent, our ability to control and direct its capabilities becomes equally sophisticated. The art of crafting precise prompts will serve as the linchpin for extracting maximum utility from these ever-evolving systems.

The Complexity of Human Communication

The Timelessness of Communication Mastery

Despite many millennia of human interaction, the art of communication remains elusive. Transferring ideas between minds, whether they be complex concepts or fleeting emotions, is no trivial matter. This enduring complexity explains why subjects like writing, linguistics, and rhetoric continue to draw enthusiasts, even with our age-old history of language proficiency.

The AI Communication Paradox

Interfacing with Artificial Minds

Consider the intricacies of human communication, laden with shared cultural nuances, implied meanings, and emotional undertones. Now, juxtapose this with the world of AI: systems devoid of our inherent grasp of these subtleties. The challenge of meaningful interaction with such entities, which interpret things quite literally, is immense.

Humanizing Machine Interaction

Yet, in a twist of ambition, we find ourselves striving to converse with AI entities as though they were our peers — understanding, empathetic, and intuitive. This ambition underscores the paramount importance of prompt engineering. It's the linchpin that transforms AI from mere calculative entities to beings capable of 'meaningful' interaction. Absent the context and nuance embedded in expertly engineered prompts, even the mightiest of AI models might flounder.

The Evolving Conversation

As human communication continues its evolutionary journey, adapting and refining with changing times and technologies, so too does the field of prompt engineering. This discipline, though nascent, is already pivotal. And, as the AI frontier continues to expand, the need for prompt engineering — ensuring our machines understand us just right — will remain ceaselessly crucial. For as long as humans aspire to interact with non-human intelligences, honing the art of crafting precise, meaningful prompts will be an enduring endeavor.

Final Words

As AI systems, particularly LLMs, become ubiquitous across industries and applications, the art of prompt engineering emerges as a pivotal facet of human-AI interaction. Tracing its evolution from simple textual inputs to sophisticated techniques accommodating autonomous agents, and understanding the nuances of human communication, the article underscores the indispensable role of prompts in harnessing the full potential of AI.

Grounding these systems in reality, while adapting to their complexities, remains an ever-evolving challenge. But with diligent attention to prompt optimization and understanding the intricacies of communication, we are poised to make the most of this transformative technology. The future of AI interaction is not just about advanced models; it's about the refined art of communicating with them.